Machine Learning: When Algorithms Get a Mind of Their Own

Discover how machine learning algorithms are reshaping our world and what happens when they think for themselves! Dive in now!

Understanding the Basics of Machine Learning: How Algorithms Learn and Adapt

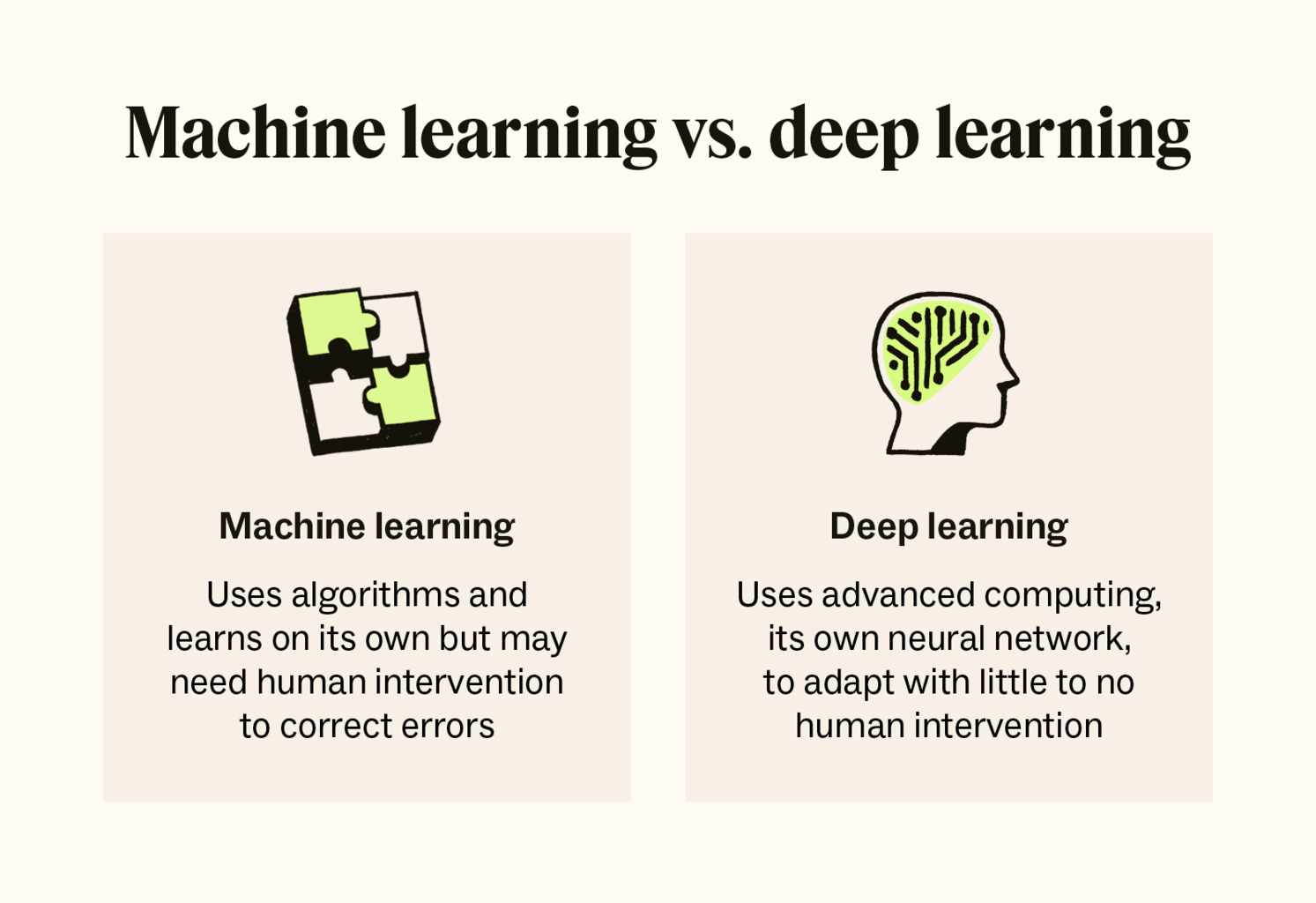

Machine learning is a subset of artificial intelligence in which algorithms learn from data and adapt to it without being explicitly programmed for each task. At its core, machine learning involves feeding data, often in large amounts, into a model, which identifies patterns and then makes predictions or decisions based on them. There are several broad approaches, each suited to different tasks: supervised learning, unsupervised learning, and reinforcement learning. Supervised learning algorithms are trained on labeled data, so they can predict labels for new examples. Unsupervised learning algorithms look for hidden patterns in unlabeled data. Reinforcement learning algorithms learn by trial and error, guided by rewards for good outcomes. This adaptability is what makes machine learning a powerful tool in today's data-driven world.
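To make the difference between supervised and unsupervised learning concrete, here is a minimal sketch in plain Python. The measurements, the labels "small" and "large", and the function names are all invented for illustration. The supervised half uses a simple nearest-centroid rule and the unsupervised half uses a one-dimensional two-cluster k-means, chosen only because they are short, not because they are what production systems use.

```python
# Toy 1-D data: hypothetical measurements with hand-assigned labels.
labeled = [(1.0, "small"), (1.2, "small"), (0.8, "small"),
           (5.0, "large"), (5.3, "large"), (4.7, "large")]


def fit_centroids(examples):
    """Supervised: use the labels to compute one mean value per class."""
    sums, counts = {}, {}
    for x, label in examples:
        sums[label] = sums.get(label, 0.0) + x
        counts[label] = counts.get(label, 0) + 1
    return {label: sums[label] / counts[label] for label in sums}


def predict(centroids, x):
    """Predict the class whose mean is closest to x."""
    return min(centroids, key=lambda label: abs(centroids[label] - x))


def two_means(values, iterations=10):
    """Unsupervised: split unlabeled values into two groups (k-means, k=2).

    Assumes the values contain at least two distinct numbers.
    """
    lo, hi = min(values), max(values)  # start the two centers at the extremes
    for _ in range(iterations):
        group_lo = [v for v in values if abs(v - lo) <= abs(v - hi)]
        group_hi = [v for v in values if abs(v - lo) > abs(v - hi)]
        lo = sum(group_lo) / len(group_lo)
        hi = sum(group_hi) / len(group_hi)
    return sorted(group_lo), sorted(group_hi)


# Supervised: the labels guide the model.
centroids = fit_centroids(labeled)
print(predict(centroids, 1.1))   # small
print(predict(centroids, 4.9))   # large

# Unsupervised: the same numbers with the labels thrown away.
unlabeled = [x for x, _ in labeled]
clusters = two_means(unlabeled)
print(clusters)
```

The supervised model can name its predictions because it was given names to learn from. The unsupervised one only discovers that the values fall into two groups, and a person still has to decide what those groups mean.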

As these algorithms receive feedback and new data, their performance can improve. Building a model typically involves three essential steps: training, validation, and testing. In the training phase, the model learns its parameters from the dataset. In the validation phase, a separate held-out portion of the data is used to tune settings such as hyperparameters and to choose between candidate models. Finally, the testing phase evaluates the model on data it has never seen, which indicates how well it is likely to generalize to real-world scenarios. Understanding these basics of machine learning is crucial for anyone looking to harness its capabilities, whether for personal projects or professional applications.
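The three phases can be sketched with nothing but the Python standard library. Everything below is an assumption made for illustration: the synthetic data, the 60/20/20 split, the helper names, and the choice of a k-nearest-neighbors classifier, where the setting being tuned on the validation set is the number of neighbors `k`.

```python
import random


def split(data, seed=0, train_pct=60, val_pct=20):
    """Shuffle the rows, then cut them into train / validation / test parts."""
    rows = list(data)
    random.Random(seed).shuffle(rows)
    a = len(rows) * train_pct // 100
    b = a + len(rows) * val_pct // 100
    return rows[:a], rows[a:b], rows[b:]


def knn_predict(train_rows, x, k):
    """Majority label (0 or 1) among the k training points nearest to x."""
    nearest = sorted(train_rows, key=lambda row: abs(row[0] - x))[:k]
    votes = sum(label for _, label in nearest)
    return 1 if votes * 2 > len(nearest) else 0


def accuracy(train_rows, eval_rows, k):
    """Fraction of eval_rows whose label the model predicts correctly."""
    hits = sum(knn_predict(train_rows, x, k) == y for x, y in eval_rows)
    return hits / len(eval_rows)


# Synthetic data: label is 1 when x >= 50, with every 10th label
# flipped to mimic noisy real-world records.
data = []
for x in range(100):
    label = int(x >= 50)
    if x % 10 == 0:
        label = 1 - label
    data.append((x, label))

# Training data is what the model learns from.
train_rows, val_rows, test_rows = split(data)

# Validation: pick the k that scores best on held-out validation data.
best_k = max((1, 3, 5), key=lambda k: accuracy(train_rows, val_rows, k))

# Testing: a single final check on data used for nothing else.
test_acc = accuracy(train_rows, test_rows, best_k)
print(f"best k = {best_k}, test accuracy = {test_acc:.2f}")
```

The test rows are touched only once, after `k` has been chosen. If they were also used to pick `k`, the final score would likely be too optimistic.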

The Ethical Implications of Machine Learning: When Algorithms Make Decisions

The rise of machine learning has transformed various sectors, but it raises significant ethical questions as algorithms increasingly make decisions that affect human lives. For instance, in areas such as healthcare and criminal justice, algorithms can influence patient treatment options or sentencing recommendations. These decisions, often based on vast datasets, can inadvertently perpetuate existing biases if the underlying data is flawed or not representative. It is crucial to consider who is accountable when these systems malfunction or produce discriminatory outcomes, as the lines between human judgment and machine decision-making begin to blur.
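One simple way to start looking for this kind of bias is to compare how often a system produces a favorable outcome for different groups. The sketch below uses made-up decisions for two hypothetical groups, "A" and "B". The gap it computes is often called the demographic parity difference. It is only a first check and not a complete fairness audit, since a gap can have legitimate causes and an absence of a gap does not prove a system is fair.

```python
from collections import defaultdict

# Hypothetical model decisions as (group, approved) pairs, invented for illustration.
decisions = [("A", 1), ("A", 1), ("A", 1), ("A", 0),
             ("B", 1), ("B", 0), ("B", 0), ("B", 0)]


def approval_rates(rows):
    """Return the share of approved decisions for each group."""
    totals, approved = defaultdict(int), defaultdict(int)
    for group, outcome in rows:
        totals[group] += 1
        approved[group] += outcome
    return {group: approved[group] / totals[group] for group in totals}


rates = approval_rates(decisions)
gap = max(rates.values()) - min(rates.values())

print(rates)  # {'A': 0.75, 'B': 0.25}
print(gap)    # 0.5
```

A large gap like this one does not say who is accountable, but it gives developers and reviewers a concrete number to question.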

Moreover, the ethical implications of machine learning extend to privacy concerns and the autonomy of individuals. As algorithms analyze personal data to enhance user experience, they can also infringe on privacy rights and manipulate behaviors. The lack of transparency in how these algorithms operate makes it challenging for individuals to understand the implications of their data being used in this manner. Thus, it becomes essential for developers, policymakers, and society at large to engage in discussions about the responsible use of technology, ensuring that ethical considerations are at the forefront of machine learning practices.

Are We Ready for AI: Exploring the Risks and Rewards of Autonomous Algorithms

As we stand on the brink of a new technological era, the question remains: Are we ready for AI? The rise of autonomous algorithms promises a plethora of benefits, including increased efficiency and enhanced decision-making capabilities across various industries. However, with these rewards come significant risks. Ethical concerns, such as bias in AI decision-making and the potential for job displacement, loom large. It's crucial for society to consider how we can harness the power of AI while mitigating its downsides. The future of artificial intelligence hinges on our ability to strike a balance between innovation and responsibility.

To navigate this complex landscape, we must engage in conversations about the ethical use of AI and the governance of autonomous systems. Examining the risks associated with autonomous algorithms can lead to guidelines that protect users and uphold justice. Here are some key considerations to keep in mind:

- Accountability - Who is responsible for decisions made by AI?

- Transparency - Can users understand how AI systems reach their conclusions?

- Security - How do we protect against malicious use of AI technology?